AI agents trust whatever data they're handed and fail confidently when it's wrong. Pantomath gives them an upstream data health check before they act.

The Silent Killer of Enterprise AI: Why Your Agents Need a "Data Pulse"

The race to deploy Enterprise AI is on. Organizations are rapidly building agents with tools like Snowflake Cortex, Databricks, or Amazon Bedrock to automate decision-making. However, most teams are overlooking an architectural flaw: AI agents are incredibly gullible.

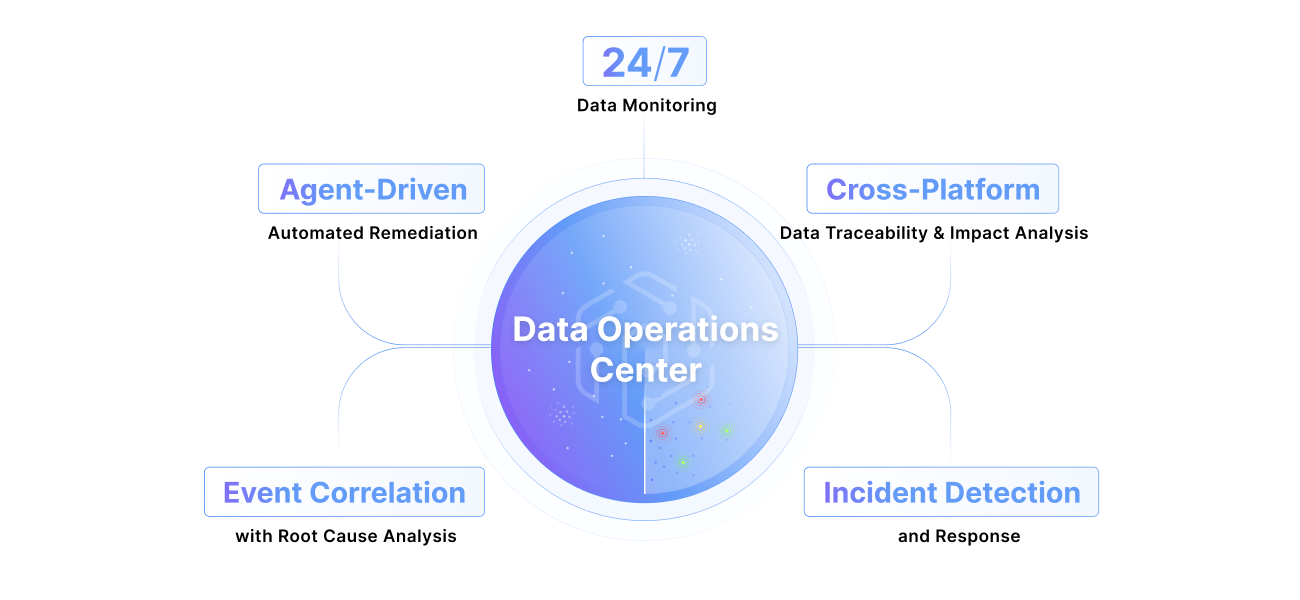

Unlike human data consumers, an AI agent doesn't "sniff test" a chart. It treats whatever data it is given as ground truth. Without a dedicated Data Operations Center (DOC) like Pantomath, your AI strategy isn't just at risk; it's a liability.

The Human Safety Net is Vanishing

In the traditional BI world, humans act as the ultimate data quality filter. A business analyst looks at a Tableau dashboard, notices a suspicious 50% drop in revenue, and pings the data team. While this is reactive and frustrating, the "safety net" of human intuition usually prevents a catastrophic business decision.

But even for humans, fixing the issue is a nightmare. The modern data stack is fragmented. When data is wrong, engineers spend hours in "war rooms," manually reverse-engineering complex pipelines across five different platforms just to find one failed job or a silent schema change.

The AI Agent Blind Spot

Now, remove the human. Imagine an AI agent built on Snowflake Cortex designed to optimize supply chain inventory.

That agent has no visibility into the "upstream" health of the data. It doesn't know that an Informatica integration job failed three hours ago or that a dbt transformation job skipped a load. The agent sees a successful query response in Snowflake and assumes it has the full picture. It then makes decisions based on stale or incomplete data, and any model trained or fine-tuned on that data inherits the same errors.

The "Unwinding" Nightmare

The danger in the agentic world is compounded by two factors:

- Impossible Reversal: Once an LLM or an agent is "poisoned" by training on bad data, you cannot simply "undo" that specific knowledge. Often, you have to scrap the progress and retrain, costing thousands in compute and weeks in lost time.

- Confident Failure: An agent won't say "this data looks weird." It will confidently recommend a multimillion-dollar procurement order based on stale data, leading to massive financial waste and a total loss of trust in the AI program.

Pantomath: The "Agent-to-Agent" Solution

To solve this, data health must move from a human-readable dashboard to a machine-readable protocol. Pantomath bridges this gap by providing a Data Operations Center (DOC) that speaks directly to your AI.

1. Agent-to-Agent Interaction via MCP

Pantomath doesn't just alert humans; it alerts other agents. Through the Model Context Protocol (MCP) and robust APIs, Pantomath allows your AI agents to check the "Data Pulse" before they execute a task. Your Snowflake Cortex agent can essentially "ask" the Pantomath agent: "Is the upstream lineage for this table healthy?"
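A minimal sketch of such a pre-flight check might look like the following. Everything here is a hypothetical stand-in: the `UpstreamHealth` fields, the `HealthChecker` callable, the `fake_check` stub, and the table names are assumptions for illustration, not Pantomath's actual MCP tools or API schema. The idea is only that the agent's task is gated on a health answer.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class UpstreamHealth:
    """Hypothetical shape of a health answer; not an actual Pantomath schema."""
    table: str
    healthy: bool
    detail: str = ""


# Hypothetical: any function that maps a table name to its upstream health.
# In practice this would wrap an MCP tool call or an HTTP API request.
HealthChecker = Callable[[str], UpstreamHealth]


def run_if_healthy(
    table: str, check: HealthChecker, task: Callable[[], str]
) -> Optional[str]:
    """Run `task` only when the upstream lineage of `table` is reported healthy."""
    status = check(table)
    if not status.healthy:
        # Refuse to act on data whose upstream pipeline is known to be broken.
        return None
    return task()


def fake_check(table: str) -> UpstreamHealth:
    """Stub lookup that pretends one table has a failed upstream job."""
    broken = {"inventory.daily_stock"}
    return UpstreamHealth(table=table, healthy=table not in broken, detail="stub")
```

With the stub, `run_if_healthy("inventory.daily_stock", fake_check, ...)` returns `None` without running the task, while a table outside the `broken` set runs normally. Passing the checker as a parameter keeps the agent logic independent of how the health answer is fetched.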

2. Real-Time RCA and ETR

If there is an issue, Pantomath provides the AI agent with more than just an error code. It provides:

- Root Cause Analysis (RCA): "The Informatica job failed due to a source credential error."

- Estimated Time to Resolution (ETR): "Data will be refreshed in 45 minutes."
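To show how an agent could act on these two signals, here is a small sketch under stated assumptions. The `Incident` class, its field names, and the `plan_action` policy are hypothetical and do not reflect a documented Pantomath payload. The agent proceeds when there is no incident, waits when the ETR fits within its own tolerance, and otherwise defers the task.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass(frozen=True)
class Incident:
    """Hypothetical incident payload; field names are assumptions."""
    root_cause: str                  # human-readable RCA text
    etr: Optional[timedelta] = None  # estimated time to resolution, if known


def plan_action(incident: Optional[Incident], max_wait: timedelta) -> str:
    """Return 'proceed', 'wait', or 'defer' for a pending agent task."""
    if incident is None:
        # No open incident upstream: the data can be used as-is.
        return "proceed"
    if incident.etr is not None and incident.etr <= max_wait:
        # The fix is expected soon enough to hold the task and retry.
        return "wait"
    # Unknown or too-long ETR: hand the decision back instead of guessing.
    return "defer"
```

For example, an incident with a 45-minute ETR and a one-hour `max_wait` yields `"wait"`, while an incident with no ETR yields `"defer"`.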

3. Protecting the AI Chat Experience

If an issue isn't resolved in time, Pantomath ensures transparency. Instead of the AI giving a wrong answer, the chat experience can proactively inform the user: "I can see the data for the West Coast region is currently being updated due to a pipeline delay. I will provide a recommendation once the data is 100% verified."
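One way a chat layer could implement this fallback is sketched below. The `build_reply` function and its parameters are hypothetical names chosen for illustration; how the affected region and reason are obtained is assumed to come from an upstream health check and is not shown.

```python
from typing import Optional


def build_reply(
    answer: str, affected_region: Optional[str], reason: Optional[str]
) -> str:
    """Return the normal answer, or a transparency notice when data is unverified."""
    if affected_region is None:
        # No known data issue: pass the model's answer through unchanged.
        return answer
    cause = f" due to {reason}" if reason else ""
    return (
        f"The data for the {affected_region} region is currently being "
        f"updated{cause}. "
        "I will provide a recommendation once the data is verified."
    )
```

The answer is withheld entirely rather than returned with a caveat, which matches the intent described above: the user gets a clear status message instead of a recommendation built on unverified data.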

Conclusion: Trust is the New Currency

As we move into an agentic world, the bottleneck isn't the AI model; it's the reliability of the data pipeline. Pantomath ensures that your AI agents are not flying blind. By enabling agent-to-agent health communication, Pantomath protects your AI investment, supports confident decision-making, and helps make your data truly AI-ready.

Stop training your agents on bad data. Start operating with Pantomath.
