When no one owns Data Operations end to end, incidents repeat and trust erodes. See why standardizing Data Operations is critical for reliable, on-time data.

Why Data Operations Need Standardization in the Enterprise

When No One Owns Data Operations, Everyone Pays

Every large enterprise depends on Data Operations, yet very few have clearly defined what that function entails or who truly owns it.

When data breaks, the work still happens: dashboards get fixed, pipelines are rerun, and reports eventually land in inboxes. But the way this work gets done is rarely intentional. It is reactive, fragmented, and driven by whoever happens to have the most context on that particular issue in the moment.

That improvisation works until it doesn’t.

As data stacks grow more interconnected and more business-critical (especially with the rise of AI), the lack of a standard operating model for Data Operations starts to show up everywhere: incidents take longer to resolve, the same failures repeat, and teams argue over ownership while the business waits.

The cost is not just technical. It is organizational.

The question no one can answer clearly

Ask a simple question inside most enterprises: Who is actually running Data Operations today?

Not who owns a specific tool or who is on call this week, but who has a real-time pulse on the health of data across the enterprise: across platforms, pipelines, and shared datasets.

In most organizations, there is no single answer.

Data engineers focus on pipelines. Analytics teams focus on dashboards. Platform teams watch infrastructure. Governance looks at policy and compliance. Each group sees a slice of the system, but no one sees the system as a whole.

The problem shows up the moment something breaks.

A shared pipeline fails. Multiple teams are affected. Some know immediately. Others find out hours later through a broken report or a business escalation. By the time everyone is in the same room, the discussion starts with reconstructing what happened instead of fixing it.

This is not a failure of effort. It is a failure of structure.

How Data Operations became everyone’s job and no one’s role

Data Operations did not emerge as a formal discipline. It grew organically, one tool and one workaround at a time.

As data volumes increased and stacks became more complex, teams added monitoring, alerting, lineage, and quality checks wherever the pain was most visible. Each solution solved a local problem for a specific role.

What never formed was a shared definition of how Data Operations should function end to end.

As a result, job titles vary. Responsibilities shift. During an incident, ownership is fluid. Whoever understands the failing system best becomes the operator, regardless of their actual role.

On a small scale, this is manageable.

At enterprise scale, it becomes a liability.

Without standard roles and shared visibility, teams spend more time coordinating than resolving. Root cause analysis slows down. Incidents repeat because no one can see patterns across tools and teams. Costs rise quietly through duplicated effort and growing support burden.

What standardizing Data Operations really means

Standardizing Data Operations does not mean forcing a single team or a rigid org chart.

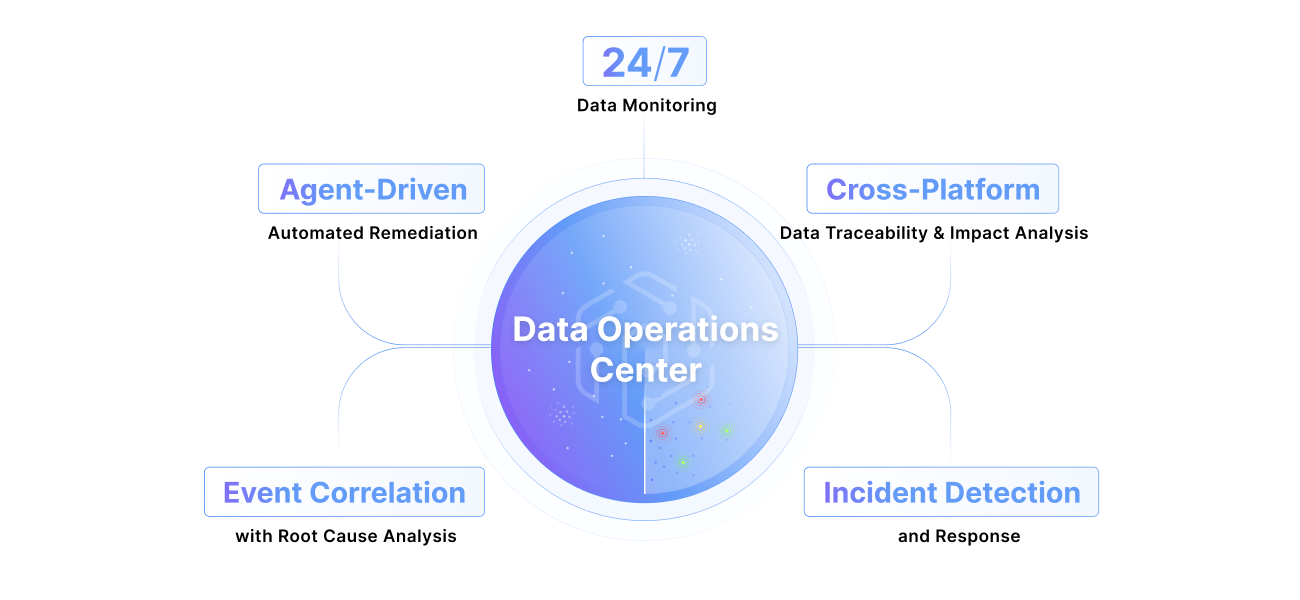

It means giving the organization a shared command center. A place where operational reality is visible across the entire data estate, not fragmented by tool or role.

A true Data Operations Center answers the questions teams ask every day, but rarely in one place:

- What is broken right now?

- Which pipelines and datasets are affected?

- Who depends on them downstream?

- Is this an isolated issue or part of a broader pattern?

- Has this happened before?

When those answers are scattered, teams operate on partial information. When they are centralized, teams can move with clarity and confidence.
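To make two of those questions concrete ("Which pipelines and datasets are affected?" and "Who depends on them downstream?"), here is a minimal sketch of a downstream-impact lookup. It assumes lineage is already available as a simple mapping from each asset to its direct consumers. The asset names and the `downstream_of` helper are hypothetical and chosen only for illustration; they do not describe any particular product's API.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets that consume it directly.
LINEAGE = {
    "orders_ingest": ["orders_clean"],
    "orders_clean": ["revenue_daily", "churn_features"],
    "revenue_daily": ["finance_dashboard"],
    "churn_features": [],
    "finance_dashboard": [],
}


def downstream_of(asset, lineage):
    """Return every asset reachable downstream of `asset`, breadth-first."""
    seen = set()
    order = []
    queue = deque(lineage.get(asset, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # already counted via another path
        seen.add(node)
        order.append(node)
        queue.extend(lineage.get(node, []))
    return order


# If the ingest job fails, these are the assets at risk.
affected = downstream_of("orders_ingest", LINEAGE)
print(affected)
```

The point of the sketch is the shape of the answer, not the traversal itself: one failure at the top of the graph maps to a list of affected assets that can be shared with every team at once.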

From fragmented ownership to shared control

With a real Data Operations Center, roles start to make sense without becoming rigid.

Data engineers can see pipeline failures in the context of downstream business impact. Analytics leaders can understand which reports are at risk before stakeholders escalate. Platform teams can identify recurring patterns instead of chasing individual alerts. Leaders gain a live view of operational health rather than a retrospective summary.

The shift is subtle, but meaningful.

Ownership moves away from individual tools and toward shared outcomes.

On-time data. Faster fixes. Less noise. Lower cost.

Why visibility changes behavior

Most enterprises already generate the signals they need to run Data Operations effectively. The problem is that those signals live in too many places.

When monitoring activity across jobs, datasets, and platforms is consolidated into a single view, patterns become obvious. Noisy assets stand out. Systemic weaknesses are easier to spot. Repeated failures are harder to ignore.

When teams can filter by platform, asset type, or ownership, they stop guessing and start prioritizing. When they can drill directly into event details and related incidents, root cause analysis becomes routine instead of heroic.
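As a rough illustration of how a consolidated view surfaces noisy assets, the sketch below counts failures per asset across a single list of monitoring events and allows filtering by platform, asset type, or owner. The `Event` fields, the sample data, and the `noisy_assets` helper are all assumptions made for this example, not a real schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    # Hypothetical event shape; real monitoring tools will differ.
    asset: str
    platform: str
    asset_type: str
    owner: str
    status: str  # "failed" or "ok"


EVENTS = [
    Event("orders_ingest", "orchestrator", "job", "data-eng", "failed"),
    Event("orders_ingest", "orchestrator", "job", "data-eng", "failed"),
    Event("revenue_daily", "warehouse", "dataset", "analytics", "failed"),
    Event("orders_ingest", "orchestrator", "job", "data-eng", "ok"),
    Event("finance_dashboard", "bi", "report", "analytics", "ok"),
]


def noisy_assets(events, min_failures=2, **filters):
    """Count failures per asset, keeping only events that match every filter."""
    failures = Counter(
        e.asset
        for e in events
        if e.status == "failed"
        and all(getattr(e, field) == value for field, value in filters.items())
    )
    # most_common() sorts by failure count, highest first.
    return [(asset, n) for asset, n in failures.most_common() if n >= min_failures]


print(noisy_assets(EVENTS))
print(noisy_assets(EVENTS, min_failures=1, owner="analytics"))
```

Once events from every tool land in one list like this, "which assets fail repeatedly?" becomes a single query rather than a cross-team investigation.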

This is how Data Operations shifts from reactive to operational: not by adding another tool, but by creating a command center.

The standard is forming now

Data Operations is becoming a first-class function, whether organizations explicitly define it or not. The companies that do will resolve incidents faster, reduce operational drag, and build trust in their data.

The rest will continue to improvise, and continue paying the hidden cost. If data runs the business, it deserves a real operating model where the first step is seeing the entire system clearly and accurately.

Next step: take a hard look at how Data Operations actually runs inside your organization today, and decide whether improvisation is still good enough.
