The Data Reliability Engineer (DRE) is becoming essential as data systems grow more complex. Learn how this role helps teams reduce incidents, speed up root cause analysis, and keep data on time and accurate.

The Rise of the Data Reliability Engineer (DRE) and the Future of Data Operations

As enterprises begin to recognize that Data Operations needs structure, a new role is quietly emerging across modern data teams.

The Data Reliability Engineer, often called a DRE, is becoming the operational linchpin of enterprise data environments. While the title is still gaining traction, the responsibilities behind it already exist in many organizations. Someone is already watching pipelines, investigating failures, tracing root causes, and coordinating fixes when critical data systems break.

The difference today is that these responsibilities are starting to become formalized.

As data becomes more critical to revenue forecasting, operational reporting, and executive decision-making, organizations are realizing that data reliability cannot remain an informal responsibility shared across multiple teams. It requires a dedicated operational function that focuses on keeping data systems stable, observable, and responsive when issues occur.

That function is increasingly taking shape through the Data Reliability Engineer role.

Why the Data Reliability Engineer Role Is Emerging

Modern data stacks are dramatically more complex than they were even a few years ago. A single enterprise pipeline may depend on orchestration tools, cloud warehouses, transformation frameworks, streaming systems, and dozens of upstream applications. At the same time, downstream consumers have multiplied, with dashboards, operational reports, machine learning models, and business applications all relying on the same data assets.

This level of interconnectedness means that a single pipeline failure can cascade across the organization.

When failures occur, someone must determine what broke, understand how it affects downstream assets, and coordinate the response across teams. In many enterprises, that responsibility still falls to whichever engineer happens to have the most context at the moment.

The Data Reliability Engineer role exists to remove that ambiguity.

Instead of treating reliability work as a side responsibility, a DRE focuses specifically on the operational health of data systems. This includes monitoring pipeline behavior, investigating incidents, performing root cause analysis, and ensuring that failures are resolved quickly and prevented from repeating.

In many ways, the role mirrors the rise of Site Reliability Engineering in infrastructure and application environments. As software systems became more complex, SRE teams emerged to manage uptime, reliability, and operational response. The same shift is now happening in the world of data.

Data reliability is becoming too important to manage informally.

What a Data Reliability Engineer Actually Does

Although the title may vary from organization to organization, the responsibilities behind the DRE role tend to follow a similar pattern.

A Data Reliability Engineer maintains visibility into the health of enterprise data pipelines and datasets. They investigate anomalies and failures when alerts fire. They trace issues through upstream and downstream dependencies to identify the root cause. They coordinate communication with affected teams and ensure that fixes are implemented correctly.
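To make the dependency-tracing step concrete, here is a minimal sketch of how downstream impact could be computed once lineage is available as a simple graph. The asset names and the `LINEAGE` mapping are hypothetical, invented for illustration; real lineage would come from whatever metadata your orchestration and warehouse tools expose.

```python
from collections import deque

# Hypothetical lineage: each key feeds the assets listed in its value.
LINEAGE = {
    "orders_ingest": ["orders_raw"],
    "orders_raw": ["orders_clean"],
    "orders_clean": ["revenue_dashboard", "churn_model"],
    "customers_raw": ["churn_model"],
}


def downstream_assets(lineage, failed):
    """Return every asset reachable from `failed`, in breadth-first order."""
    seen = set()
    order = []
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:  # skip assets already reached by another path
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


print(downstream_assets(LINEAGE, "orders_raw"))
# ['orders_clean', 'revenue_dashboard', 'churn_model']
```

Running the same traversal over a reversed copy of the graph would list upstream candidates for the root cause instead of downstream impact.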

Equally important, a DRE works to reduce the frequency of incidents over time.

By identifying recurring failure patterns, unreliable pipelines, and noisy monitoring signals, they can prioritize improvements that reduce operational noise and improve stability. This allows the organization to move from constant reactive troubleshooting toward predictable and resilient Data Operations.
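As a rough illustration of spotting recurring failure patterns, the sketch below counts how often each pipeline and failure category pair shows up in an incident log. The `INCIDENTS` records, the category labels, and the `min_count` threshold are all assumptions made for this example, not data from any real environment.

```python
from collections import Counter

# Hypothetical incident log: one (pipeline, failure category) pair per incident.
INCIDENTS = [
    ("orders_ingest", "upstream_schema_change"),
    ("orders_ingest", "upstream_schema_change"),
    ("billing_export", "warehouse_timeout"),
    ("orders_ingest", "late_arrival"),
    ("billing_export", "warehouse_timeout"),
    ("orders_ingest", "upstream_schema_change"),
]


def recurring_failures(incidents, min_count=2):
    """Return (pattern, count) pairs seen at least `min_count` times, most frequent first."""
    counts = Counter(incidents)
    return [(pattern, n) for pattern, n in counts.most_common() if n >= min_count]


for (pipeline, category), n in recurring_failures(INCIDENTS):
    print(f"{pipeline}: {category} x{n}")
# orders_ingest: upstream_schema_change x3
# billing_export: warehouse_timeout x2
```

A ranking like this is only a starting point, but it gives a team a defensible order in which to tackle reliability improvements.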

The role ultimately exists to ensure that data arrives when the business expects it and behaves the way the business trusts it to behave.

However, as many organizations quickly discover, performing this work manually becomes extremely difficult at enterprise scale.

The Limits of Manual Data Operations

Even experienced engineers struggle to maintain operational awareness across modern data environments.

Monitoring alerts often originate from multiple systems, each carrying its own partial context. Investigating a pipeline failure may require checking orchestration logs, querying warehouse jobs, reviewing transformation steps, and tracing downstream dashboards that depend on the data. Each step requires navigating separate tools and assembling fragments of information.

As data environments grow, this investigative process becomes slower and more complex.

Engineers spend increasing amounts of time gathering context before they can even begin diagnosing the issue itself. Meanwhile, stakeholders are waiting for updates, and other teams may not yet realize that their own data assets are affected.

This is where the operational model begins to strain.

Even with a dedicated Data Reliability Engineer role, the volume of signals and the complexity of modern data stacks make it difficult for any single individual or team to maintain complete visibility.

What the role needs is operational support. This is why Pantomath exists.

Why the Next Step Is an AI-Powered DRE Agent

Just as security teams use automated systems to detect threats and infrastructure teams use automated observability to monitor application health, Data Operations increasingly requires automation to maintain reliability at scale.

This is where the concept of a DRE Agent begins to change how enterprises manage data reliability.

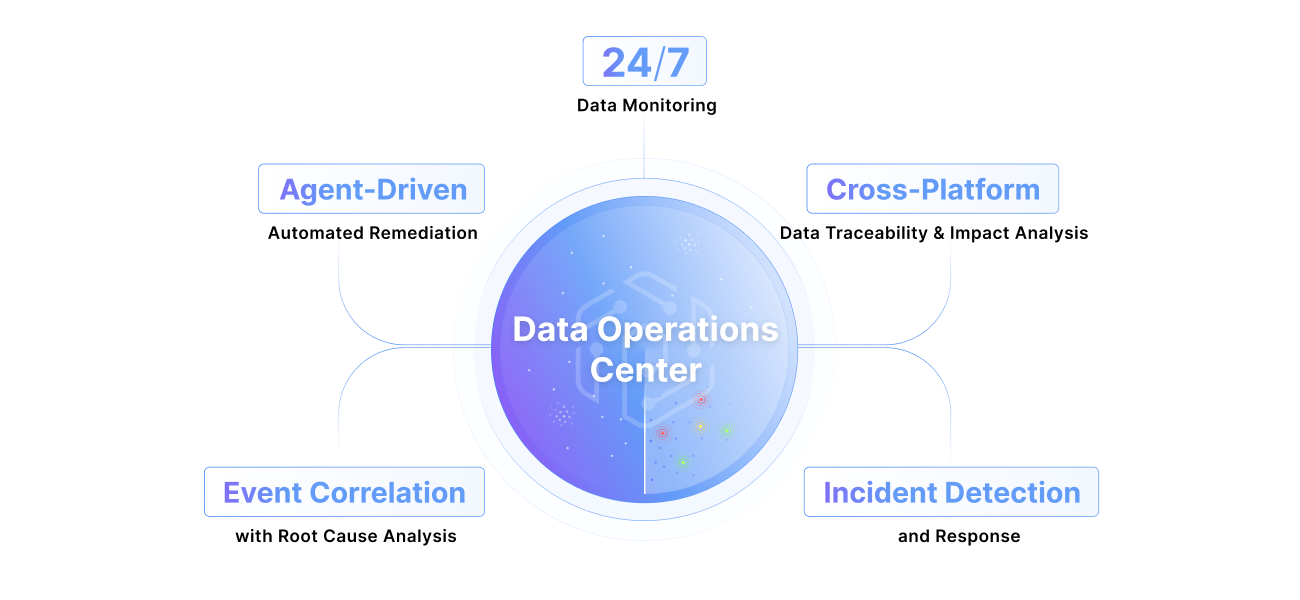

Rather than requiring engineers to manually gather signals from across the data stack, a DRE Agent continuously monitors pipelines, datasets, and operational events. It correlates activity across platforms and identifies patterns that indicate potential failures or emerging reliability risks.
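One simple way to picture cross-platform correlation is to group alerts that arrive close together in time into a single incident. The sketch below does exactly that and nothing more; it is not a description of how Pantomath or any particular product works. The `Alert` and `Incident` types, the source names, and the ten-minute window are hypothetical choices for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Alert:
    source: str   # hypothetical system name, e.g. "orchestrator" or "warehouse"
    asset: str    # pipeline or dataset the alert refers to
    at: datetime  # when the alert fired


@dataclass
class Incident:
    alerts: list = field(default_factory=list)

    @property
    def assets(self):
        """Sorted, de-duplicated assets touched by this incident."""
        return sorted({a.asset for a in self.alerts})


def group_alerts(alerts, window=timedelta(minutes=10)):
    """Group alerts into incidents when consecutive alerts are at most `window` apart."""
    incidents = []
    for alert in sorted(alerts, key=lambda a: a.at):
        if incidents and alert.at - incidents[-1].alerts[-1].at <= window:
            incidents[-1].alerts.append(alert)
        else:
            incidents.append(Incident(alerts=[alert]))
    return incidents
```

Time proximity alone will merge unrelated alerts that happen to coincide, so a realistic system would also need lineage or ownership information to decide what belongs together.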

When an issue occurs, the agent can immediately surface the context engineers need to understand what happened.

Instead of starting from scratch, the DRE receives a structured view of the incident. They can see which pipeline failed, which datasets are affected, and which downstream assets rely on that data. They can review related incidents and historical patterns that may point toward the underlying cause.

Because the context is already assembled, this can substantially shorten the time required to perform root cause analysis.

More importantly, it allows Data Operations teams to shift from reactive investigation to proactive reliability management.

The Future of Data Operations Is Reliability Engineering

As enterprises continue to scale their data platforms, the discipline of Data Operations will inevitably evolve.

Organizations will move away from ad hoc monitoring and fragmented ownership toward structured operational models that prioritize reliability and accountability. Within that model, the Data Reliability Engineer role will play a central part in ensuring that data systems remain dependable as complexity grows.

However, reliability engineering cannot succeed through manual effort alone.

To manage modern data environments effectively, Data Operations teams need intelligent systems that can monitor activity, surface context, and guide investigation in real time.

The rise of the Data Reliability Engineer is therefore closely tied to the rise of automation.

Together, they represent the next stage of enterprise Data Operations, where reliability is no longer an afterthought but a core operational capability that ensures the business always has access to trusted, on-time data.

—

Ready to move from reactive monitoring to autonomous data operations? Explore Pantomath’s Data Operations Center or request a demo.

—

Stay tuned for more on how Pantomath autonomously resolves issues and the specifics around our AI Agents.
